If you’re implementing new technology in your workplace, you want the rollout to go as smoothly as possible — which means getting buy-in from the employees who will use the technology.

Most employees understand that technological change is constant in the modern workplace. But implementing new technologies can still cause problems for workers, who must learn the new tools while continuing to complete their tasks and projects.

To get your team on board, consider these tips for taking workers’ needs into account.

Communicate your intentions.

Communicating early and often is vital. Whatever new technology you’re introducing, whether workplace assessments or tools like Trello, Confluence or Slack, give as much notice as possible to every team member who will use the system. Simply by announcing that a change is planned, you take a step toward obtaining buy-in. And don’t forget to include your remote teams in all communications.

Get input early in the process.

Before you create your formal implementation plan, take time to survey workers about their needs and their perceptions of the new technology. If talking with every team member is not feasible, try to at least sample a variety of departments in your organization to get a feel for how the new solution may affect workflow. Tech-savvy Millennials, especially, will want a voice in any technology implementation — and they will have valuable input to offer.

Create a workable plan.

After gathering information from various stakeholders, you can put together a plan that includes a reasonable rollout timeline. Without a clear, step-by-step plan, your implementation may fail or cause more problems than it solves for your team. While you cannot anticipate every contingency, you can make an effort to consider various usage scenarios and what could go wrong.

Don’t rush the implementation.

Once you’ve put together a schedule that suits most of your team, don’t cave to the temptation to speed up the implementation. Many factors will arise during rollout that you could not have anticipated in the planning phase, and you need plenty of time to respond while not placing undue stress on your workers.

Provide adequate training.

Your implementation isn’t complete once the software is installed and tested. Providing sufficient, ongoing training is critical for keeping your employees engaged and for getting buy-in for future rollouts. Workers do not want to relearn their jobs each time you introduce a new technology, so provide customized training that lets them integrate the new solution into their existing workflow with as little inconvenience as possible.

Have a plan B.

What if the new technology fails during or shortly after implementation? If you want employees to support future technology changes, a backup plan is critical. Whether you use onsite backups or virtual servers, you’ll need a method for rolling back to your previous setup quickly.

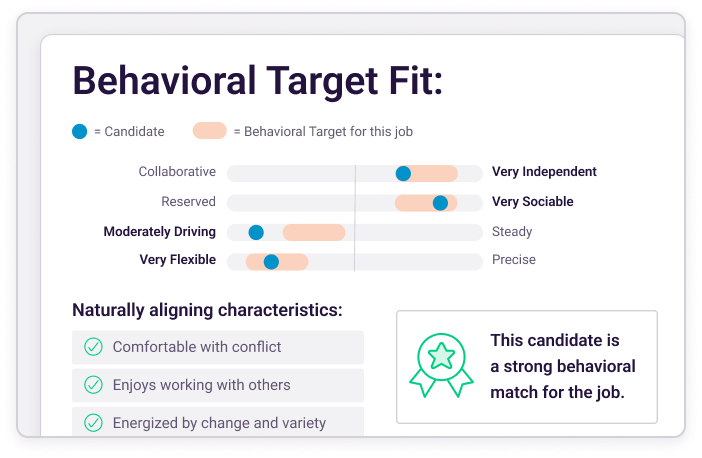

If you’re ready to introduce something new and exciting at your company, check out PI Insights, a growing collection of tools allowing managers and employees to easily understand workplace behaviors, be more self-aware, work better together, and make everyday workplace interaction more purposeful.